Our ability to cram ever-smaller transistors onto a chip has enabled today’s age of ubiquitous computing. But that approach is finally running into limits, with some experts declaring an end to Moore’s Law and a related principle known as Dennard scaling.

Those developments couldn’t be coming at a worse time. Demand for computing power has skyrocketed in recent years thanks in large part to the rise of artificial intelligence, and it shows no signs of slowing down.

Now Lightmatter, a company founded by three MIT alumni, aims to sustain computing’s remarkable progress by rethinking the lifeblood of the chip: the signals that carry its data. Instead of relying solely on electricity, the company also uses light to process and transport data. Its first two products, a chip specialized for artificial intelligence operations and an interconnect that moves data between chips, use both photons and electrons to run more efficiently.

“The two problems we are solving are ‘How do chips talk?’ and ‘How do you do these [AI] calculations?’” Lightmatter co-founder and CEO Nicholas Harris PhD ’17 says. “With our first two products, Envise and Passage, we’re addressing both of those questions.”

Reflecting the size of the problem and the demand for AI, Lightmatter raised just over $300 million in 2023 at a valuation of $1.2 billion. The company is now demonstrating its technology with some of the world’s largest technology companies, in hopes of reducing the massive energy demands of data centers and AI models.

“We’re going to enable platforms on top of our interconnect technology that are made up of hundreds of thousands of next-generation compute units,” Harris says. “That simply wouldn’t be possible without the technology that we’re building.”

From idea to $100K

Before coming to MIT, Harris worked at the semiconductor company Micron Technology, where he studied the fundamental devices behind integrated circuits. The experience showed him that the traditional approach to improving computer performance, cramming more transistors onto each chip, was hitting its limits.

“I saw how the roadmap for computing was slowing, and I wanted to figure out how I could continue it,” Harris says. “What approaches can augment computers? Quantum computing and photonics were two of those pathways.”

Harris came to MIT to work on photonic quantum computing for his PhD under Dirk Englund, an associate professor in the Department of Electrical Engineering and Computer Science. As part of that work, he built silicon-based integrated photonic chips that could send and process information using light instead of electricity.

The work led to dozens of patents and more than 80 research papers in prestigious journals like Nature. But another technology also caught Harris’s attention at MIT.

“I remember walking down the hall and seeing students just piling out of these auditorium-sized classrooms, watching relayed live videos of lectures to see professors teach deep learning,” Harris recalls, referring to the artificial intelligence technique. “Everybody on campus knew that deep learning was going to be a huge deal, so I started learning more about it, and we realized that the systems I was building for photonic quantum computing could actually be leveraged to do deep learning.”

Harris had planned to become a professor after his PhD, but he realized he could attract more funding and innovate more quickly through a startup. He teamed up with Darius Bunandar PhD ’18, who was also studying in Englund’s lab, and Thomas Graham MBA ’18. The co-founders launched into the startup world by winning the 2017 MIT $100K Entrepreneurship Competition.

Seeing the light

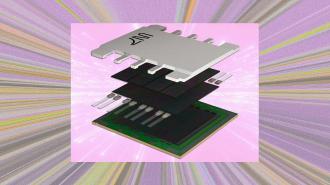

Lightmatter’s Envise chip takes the part of computing that electrons do well, like memory, and combines it with what light does well, like performing the massive matrix multiplications of deep-learning models.

“With photonics, you can perform multiple calculations at the same time because the data is coming in on different colors of light,” Harris explains. “In one color, you could have a photo of a dog. In another color, you could have a photo of a cat. In another color, maybe a tree, and you could have all three of those operations going through the same optical computing unit, this matrix accelerator, at the same time. That drives up operations per area, and it reuses the hardware that’s there, driving up energy efficiency.”
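To make Harris’s description concrete, here is a minimal software sketch of the idea, assuming a simplified model in which each color of light carries one input vector and the optical unit applies a single fixed weight matrix to all of them. The function names `matvec` and `wdm_matvec`, the wavelength labels, and the numbers are hypothetical and purely illustrative; this is not Lightmatter’s design or API. A real photonic processor would handle the channels physically in parallel rather than in a Python loop.

```python
# Conceptual sketch only (hypothetical names, not Lightmatter's design):
# one fixed weight matrix stands in for the optical matrix accelerator,
# and each input vector is tagged with a made-up "wavelength" label to
# mimic data arriving on different colors of light.
from typing import Dict, List

Matrix = List[List[float]]
Vector = List[float]


def matvec(weights: Matrix, x: Vector) -> Vector:
    """Multiply a matrix by a vector: y[i] = sum over j of weights[i][j] * x[j]."""
    if any(len(row) != len(x) for row in weights):
        raise ValueError("matrix column count must match vector length")
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def wdm_matvec(weights: Matrix, inputs_by_wavelength: Dict[str, Vector]) -> Dict[str, Vector]:
    """Apply the same weights to every input channel.

    Optical hardware would process the channels simultaneously; this
    sketch loops over them, but the key point is that one set of
    weights (one piece of hardware) is reused for every channel.
    """
    return {wl: matvec(weights, x) for wl, x in inputs_by_wavelength.items()}


# Usage: three hypothetical inputs ("dog", "cat", "tree") share one matrix.
weights = [[1.0, 0.0, 2.0],
           [0.0, 1.0, -1.0]]
inputs = {
    "red (dog)": [1.0, 2.0, 3.0],
    "green (cat)": [0.0, 1.0, 0.0],
    "blue (tree)": [2.0, 0.0, 1.0],
}
for wl, y in wdm_matvec(weights, inputs).items():
    print(wl, y)  # e.g. red (dog) [7.0, -1.0]
```

Reusing one weight matrix across several inputs is what Harris means by reusing the hardware: the cost of setting up the matrix is paid once, while the number of operations scales with the number of colors.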

Passage exploits light’s low latency and high bandwidth to link processors, much as fiber-optic cables use light to send data over long distances. It also lets chips as large as entire wafers act as a single processor. Moving information between chips is central to running the massive server farms that power cloud computing and AI systems such as ChatGPT.

Both products are designed to make computing more energy efficient, which Harris says is necessary to keep up with rising demand without huge increases in power consumption.

“By 2040, some predict that around 80 percent of all energy usage on the planet will be devoted to data centers and computing, and AI is going to be a huge fraction of that,” Harris says. “When you look at computing deployments for training these large AI models, they’re headed toward using hundreds of megawatts. Their power usage is on the scale of cities.”

Lightmatter is currently working with chipmakers and cloud service providers toward mass deployment. Harris notes that because the company’s technology is built on silicon, it can be produced in existing semiconductor fabrication facilities without major process changes.

These ambitious plans are meant to open a new path forward for computing, one with major implications for the environment and the economy.

“We’re going to continue looking at all of the pieces of computers to figure out where light can accelerate them, make them more energy efficient, and faster, and we’re going to continue to replace those parts,” Harris says. “Right now, we’re focused on interconnect with Passage and on compute with Envise. But over time, we’re going to build out the next generation of computers, and it’s all going to be centered around light.”

Republished with permission of MIT News.