Google Maps will soon offer another perspective on our cities via “immersive view.”

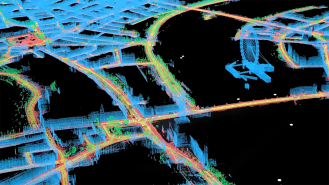

Existing somewhere in between satellite and street, immersive view uses AI to stitch together billions of Google’s satellite and Street View images to render a kind of drone’s-eye-view of the cityscape.


“We’re able to fuse those together, so that we can actually understand, okay, these are the heights of the buildings,” Google VP of engineering Liz Reid told The Verge’s David Pierce. “How do we combine that with Street View? How do we combine it with aerial view to make something that feels much more like you were there?”

Immersive view will allow users to go from an overhead perspective down to street level, something akin to “playing a video game on medium graphics set in a precisely scaled real world,” as Pierce put it.

Users will also be able to toggle on Google Maps' other layers as they swoop over neighborhoods, around famous landmarks, and down streets, Google Maps VP Miriam Daniel wrote in a blog post. They can view places at different times of day and in different weather conditions, and get real-time reports on how busy businesses are and on traffic conditions.

You can even duck your head into a digital rendering of restaurants, a feature that drew applause when it was demonstrated at Google I/O, the company's annual developer conference.

Immersive view will roll out later this year for Tokyo, San Francisco, New York, Los Angeles, and London.

Much of what can be known about the world is "in the physical and geospatial information around us," Alphabet CEO Sundar Pichai said during his I/O keynote speech.

Immersive view has been in the works for a while, but only recently has it become ready for release, and even then, at first, only in select neighborhoods of Tokyo, San Francisco, New York, Los Angeles, and London.

“It’s a thing where we had demos years ago, and it was like, ‘oh, here’s the thing,’ but it didn’t really work,” Reid told The Verge. “Now the technology has come a long way into making it feel pretty natural.”

Immersive view is scheduled to roll out later this year, and the company says more cities will soon follow.

We’d love to hear from you! If you have a comment about this article or if you have a tip for a future Freethink story, please email us at [email protected].