Plenty of people are willing to predict when we’ll have autonomous cars — heck, Elon Musk does it publicly all the time — but two accomplished computer scientists are putting their money where their mouths are.

“I bet [Jeff Atwood] $10k that by January 1st, 2030, completely autonomous self-driving cars meeting SAE J3016 level 5 will be commercially available for passenger use in major cities,” tweeted John Carmack, consulting CTO at VR company Oculus.

Atwood, co-founder of the popular Q&A website network Stack Exchange, elaborated on the bet and his position on his blog, Coding Horror, noting that the loser will pay the money to the charity of the winner’s choice.

So, what needs to happen for one of these guys to walk away with $10,000 for their favorite nonprofit?

Level 5 autonomy

As Carmack states in his tweet, to win the bet, there has to be a car with “Level 5” autonomy that people can pay to use, so let’s break that down.

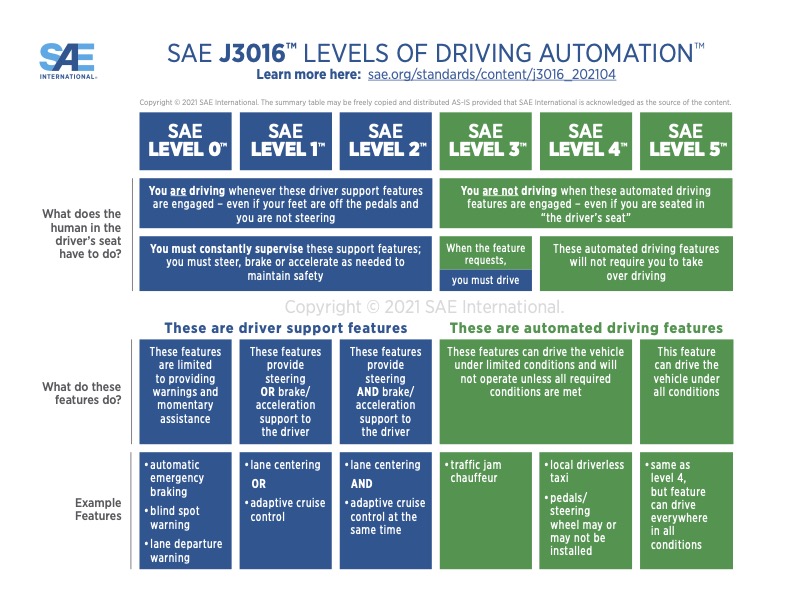

In 2014, the Society of Automotive Engineers (SAE) published SAE J3016, the industry standard for classifying autonomous cars. According to it, every vehicle falls into one of six levels of autonomy, ranging from 0 to 5.

Cars at Levels 0, 1, and 2 have some driver assistance features — blind spot warnings, adaptive cruise control, etc. — but a human is always supervising the features and in control of the car.

Tesla’s Autopilot is an example of a Level 2 system, since it can more or less drive itself on the highway, but still requires the driver to be alert and in control.

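Level 3 and 4 vehicles can drive themselves under certain conditions. At Level 3, a human must still be ready to take over when the system asks; at Level 4, no human fallback is needed as long as the car stays within those conditions. Waymo’s driverless taxis and General Motors’ Cruise Origin use Level 4 systems, which can drive themselves only in certain cities, on certain routes, or in certain weather conditions.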

That brings us to Level 5 autonomy. These vehicles can drive anywhere, under any conditions, without ever needing help from a human: a passenger gets in, sets any destination, and can then ignore the vehicle completely for the rest of the trip.

Under the terms of the $10,000 bet, Carmack wins if people in any of the 10 most populous U.S. cities can use such a car by January 1, 2030.

The over/under

While Elon Musk is perpetually confident that fully autonomous cars are just around the bend, other experts think vehicles with Level 5 autonomy are still decades away.

Even though there has been tremendous progress in the last decade moving from Level 0 to Level 4, that final jump to Level 5 may prove to be harder than the rest put together.

Some pessimists think Level 5 cars will never arrive, and the hurdles are daunting: designing a Level 5 driving system is a huge technological challenge because of the enormous number of constantly changing variables involved (other drivers, pedestrians, weather conditions, construction, etc.).

Even something as ordinary as a plant can throw a self-driving car’s AI for a loop.

“I can show [a self-driving algorithm] a million images of a stop sign, and it will learn what a stop sign is from those images,” Missy Cummings, director of Duke University’s Humans and Autonomy Laboratory, told Marketplace Tech. “But if it sees a stop sign that doesn’t match exactly those images, then it can’t recognize it.”

“This is a huge problem, because if a strand of kudzu leaves starts to grow across just the top 20% of a stop sign … it doesn’t recognize it, because it’s never seen a stop sign with one strand of kudzu leaves across it,” she continued.

Atwood is clearly aware of this difficulty — he is betting against Level 5 autonomy by 2030, after all — but he’s still optimistic that someone will prove him wrong.

“[F]ully autonomous vehicles are a fascinating, incredibly challenging computer science problem, and I want everyone reading this to take it as just that, a challenge,” he wrote.

“Make it happen by 2030, and I’ll be popping champagne along with you and everyone else!” he added.

We’d love to hear from you! If you have a comment about this article or if you have a tip for a future Freethink story, please email us at [email protected].