Researchers in California have unveiled two new brain-computer interfaces (BCIs) that translate brain signals into words. In two people who can no longer talk on their own, the devices enabled “speech” at speeds up to four times faster than any previous device.

“It is now possible to imagine a future where we can restore fluid conversation to someone with paralysis, enabling them to freely say whatever they want to say with an accuracy high enough to be understood reliably,” said Francis Willett, who co-authored a study of one of the devices.

The challenge: Brain injuries, neurological disorders, strokes, and other health issues have robbed countless people of their ability to speak. They understand language and know what they want to say, but their bodies just don’t cooperate.

Pat Bennett is one of them.

The 68-year-old was diagnosed with amyotrophic lateral sclerosis (ALS) about a decade ago, and as her disease progressed, she lost the ability to move the muscles needed to produce decipherable speech. She can still type with her fingers, but the process is getting increasingly difficult.

Ann Johnson is another.

In 2005, she suffered a brain stem stroke that left her completely paralyzed. Now 47 years old, she can make small movements of her head and parts of her face, but still can’t speak. She uses an assistive device to spell out the words she wants to say at a rate of just 14 words per minute (wpm), far slower than the average talking speed of 160 wpm.

What’s new? Bennett and Johnson have now regained the ability to “speak” at speeds averaging 60 to 80 wpm thanks to new speech BCIs, which use a combination of brain implants and trained computer algorithms to translate thoughts into text.

The previous record for speech with a BCI was just 18 wpm.

Bennett’s system was developed by a team at Stanford University, while Johnson’s was the work of researchers at UC San Francisco. The Stanford study was previously shared on the preprint server bioRxiv, and both groups published papers on their devices in the peer-reviewed journal Nature on August 23.

How they work: In 2022, Bennett underwent surgery to have four sensors implanted in the outermost layer of her brain in areas known to play a role in speech. Gold wires running from the brain implants connect to a port in her skull.

After connecting the port to a computer, she spent about 100 hours — across 25 sessions — attempting to repeat sentences from a large dataset.

By analyzing her brain activity during these sessions, a computer algorithm learned what it looked like in Bennett’s brain when she tried to say each of the 39 basic phonemes — units of sound, such as “sh” or “t” — in the English language.

Now, when Bennett tries to speak, the system sends its best guess as to what phonemes she’s thinking about to a language model, which predicts the words and displays them on a screen.

“This system is trained to know what words should come before other ones, and which phonemes make what words,” said Willett. “If some phonemes were wrongly interpreted, it can still take a good guess.”
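To make that idea concrete, here is a toy sketch of how a language model can rescue a misread phoneme. It is not the Stanford team's code: the mini-lexicon, the phoneme labels, the bigram scores, and the function names are all hypothetical, and it assumes the phonemes arrive already grouped into words, which a real decoder would have to work out for itself.

```python
from difflib import SequenceMatcher

# Hypothetical mini-lexicon: word -> phoneme sequence (ARPAbet-style labels).
LEXICON = {
    "she": ["SH", "IY"],
    "see": ["S", "IY"],
    "tea": ["T", "IY"],
    "talks": ["T", "AO", "K", "S"],
    "docks": ["D", "AA", "K", "S"],
}

# Hypothetical bigram scores: how plausible is a word after the previous one?
# "<s>" marks the start of a sentence.
BIGRAM = {
    ("<s>", "she"): 0.6,
    ("<s>", "see"): 0.3,
    ("<s>", "tea"): 0.1,
    ("she", "talks"): 0.8,
    ("she", "docks"): 0.2,
}


def phoneme_similarity(decoded, reference):
    """Return a 0-1 similarity between two phoneme sequences."""
    return SequenceMatcher(None, decoded, reference).ratio()


def best_word(decoded_phonemes, prev_word):
    """Pick the word that best balances phoneme match and word-order prior."""
    def score(word):
        match = phoneme_similarity(decoded_phonemes, LEXICON[word])
        prior = BIGRAM.get((prev_word, word), 0.01)  # small floor for unseen pairs
        return match * prior

    return max(LEXICON, key=score)


def decode(phoneme_groups):
    """Greedily turn a list of per-word phoneme guesses into words."""
    words, prev = [], "<s>"
    for group in phoneme_groups:
        prev = best_word(group, prev)
        words.append(prev)
    return words


# The second word has one wrong phoneme ("AA" instead of "AO").
print(decode([["SH", "IY"], ["T", "AA", "K", "S"]]))
```

In this example the garbled second word matches “talks” and “docks” equally well on phonemes alone, and the word-order prior after “she” breaks the tie in favor of “talks.”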

Using the system, Bennett can “speak” at an average rate of 62 wpm. When restricted to just a 50-word vocabulary, the speech BCI has an error rate of 9.1%. When the vocabulary is expanded to 125,000 words, the error rate rises to 23.8%, meaning about one in four words is wrong.

That sounds high, but it’s also a huge step forward. The BCI that previously held the speed record had a median error rate of 25% on a 50-word vocabulary.
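For readers curious how such a figure is calculated, the sketch below assumes the error rates quoted here are word error rates, the usual yardstick in speech recognition: the number of word substitutions, insertions, and deletions needed to turn the decoded sentence into the intended one, divided by the length of the intended sentence. The function name and example sentences are illustrative only.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference sentence must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr

    return prev[-1] / len(ref)


# One wrong word out of four gives a 25% error rate.
print(word_error_rate("i want some water", "i want sum water"))
```

Because insertions count as errors too, this measure can exceed 100% when the decoded sentence is much longer than the intended one.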

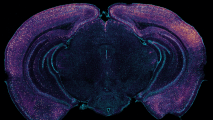

Meanwhile, Johnson’s team placed just one sensor on the surface of her brain, a less invasive procedure, though that sensor contained about the same total number of electrodes as the four sensors used by the Stanford group.

She then spent weeks repeating phrases from a 1,024-word dataset to train her algorithm to predict which of the 39 English phonemes she was trying to say. By the end of the training, the system could predict the words from the dataset that she was trying to say at a median speed of 78 wpm, with an error rate of 25.5%.

Rather than just having the words appear as text on a screen, though, the UCSF team took their speech BCI a step further, pairing it with a digital avatar of Johnson’s face and a synthetic voice trained to sound just like her.

To train the avatar to make the right facial expressions for Johnson, the researchers recorded her brain activity as she attempted to make the expressions, over and over again.

To recreate Johnson’s voice, they fed an AI a recording of her speaking at her wedding, which took place just months before her life-changing stroke.

“When Ann first used this system to speak and move the avatar’s face in tandem, I knew that this was going to be something that would have a real impact,” said researcher Kaylo Littlejohn.

Looking ahead: Both Bennett and Johnson were required to undergo risky brain surgery to have their sensors implanted, and unfortunately, over time, scar tissue forming around the brain implants is likely to begin interfering with the brain signals.

The error rates are still relatively high, too, and both systems are only usable in the lab and require many hours of training.

But wireless BCIs and brain implants that don’t lead to scar tissue or require invasive procedures are in development, and the AIs powering speech BCIs are getting progressively better, which could lead to lower error rates and faster training times.

In the future, it’s not hard to imagine that these technologies, combined with digital avatars and improved synthetic voices, could help countless people communicate effortlessly and expressively, just as they could before disease or injury took away their voices.
